Why we built Autotune

How do we know which signals matter? And when should we hand an account over to sales?

Our customers ask us these questions again and again. Some PLG companies with large data teams dedicate time to building models that answer them. Most of the time, though, resources are scarce, and GTM teams are left waiting in a backlog of data science requests.

We built Autotune to decouple GTM innovation from months-long data science projects, and to do it at a lower cost so teams can operate more efficiently. Data science teams can then focus on core IP, while GTM teams focus on moving the needle on revenue growth without waiting on requests or burning cash on expensive headcount.

We built Autotune to be flexible, because the depth of internal analysis varies from company to company. When we started working with Figma, their data scientists had already built models and identified key product signals for their GTM teams to focus on. We keep those as the key Signals surfaced to their reps and use Autotune to support and complement those existing analyses.

Why data science vs. simple analysis?

A data science approach is particularly valuable because identifying product signals means analyzing a large, constantly changing body of data and comparing many attributes against each other at once.

Rules-based scoring or simple analysis misses the opportunity

Doing this in a pivot table isn't feasible, and it is hard to take an unbiased view of your data. Simple analysis starts with a person filtering a dataset down to what they expect to matter, so it can easily miss key product signals.

How Autotune works

Autotune is an advanced ML feature that analyzes your historical product and conversion data to identify the product Signals with the highest propensity to convert, allowing GTM teams to optimize how they use human interactions to drive efficient growth.

Step 1: Analyze past conversions from your product data

Autotune identifies Signals by learning from conversions within your product, specifically looking at how and when customers convert between product tiers (e.g., Trial to Paid).
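As a rough illustration of what "learning from conversions" can mean, the sketch below computes, for each candidate Signal, the share of accounts showing that Signal that later converted (e.g., Trial to Paid), and compares it to the overall baseline rate. The `Account` record, the signal names, and the lift calculation are hypothetical; Endgame's data model and Autotune's internals are not described here, so treat this as a minimal sketch of the idea rather than the actual method.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    """Hypothetical account record: Signals it showed and whether it converted."""
    name: str
    signals: set = field(default_factory=set)
    converted: bool = False  # e.g., moved from Trial to Paid


def baseline_rate(accounts: list) -> float:
    """Share of all accounts that converted."""
    return sum(a.converted for a in accounts) / len(accounts)


def signal_conversion_rates(accounts: list) -> dict:
    """Conversion rate among the accounts that showed each Signal."""
    seen: dict = {}
    converted: dict = {}
    for acct in accounts:
        for sig in acct.signals:
            seen[sig] = seen.get(sig, 0) + 1
            converted[sig] = converted.get(sig, 0) + int(acct.converted)
    return {sig: converted[sig] / seen[sig] for sig in seen}


def signal_lift(accounts: list) -> dict:
    """How many times more likely accounts with a Signal are to convert than baseline."""
    base = baseline_rate(accounts)
    if base == 0:
        return {}  # no conversions at all, so lift is undefined
    return {sig: rate / base for sig, rate in signal_conversion_rates(accounts).items()}


if __name__ == "__main__":
    # Hypothetical history of four trial accounts.
    history = [
        Account("a", {"invited_teammate", "used_api"}, converted=True),
        Account("b", {"invited_teammate"}, converted=True),
        Account("c", {"used_api"}, converted=False),
        Account("d", set(), converted=False),
    ]
    print(signal_conversion_rates(history))
    print(signal_lift(history))
```

A per-Signal rate on its own can be misleading when few accounts show a Signal, which is one reason a real system would weigh sample size alongside the rate.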

Conversion History and Autotune

Step 2: Recommend highest propensity product Signals

Autotune’s ML models are trained using a hyperparameter search across all fields available in Endgame, producing a set of recommended Signals, each with its corresponding conversion rate. Endgame Admins can then select the model best suited to the degree of precision they want (e.g., fine vs. coarse tuning).
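Autotune's models and search space aren't documented here, so the following is only a minimal stand-in for the idea: a small grid search that turns one numeric field (say, a hypothetical seats-added count) into a threshold Signal and reports its conversion rate. The `min_support` parameter is also hypothetical and only loosely mirrors the fine vs. coarse trade-off: a low value allows narrow, precise Signals backed by few accounts, while a high value forces broader ones.

```python
from typing import List, Optional, Tuple


def best_threshold(
    values: List[int],
    converted: List[bool],
    candidates: List[int],
    min_support: int,
) -> Optional[Tuple[int, float]]:
    """Return (threshold, conversion_rate) for the Signal "value >= threshold"
    with the highest conversion rate, considering only thresholds met by at
    least `min_support` accounts. Returns None if no candidate qualifies."""
    best: Optional[Tuple[int, float]] = None
    for t in candidates:
        # Conversion outcomes of the accounts that would fire this Signal.
        hits = [c for v, c in zip(values, converted) if v >= t]
        if len(hits) < min_support:
            continue  # too few accounts to trust this Signal
        rate = sum(hits) / len(hits)
        if best is None or rate > best[1]:
            best = (t, rate)
    return best


if __name__ == "__main__":
    seats_added = [0, 1, 2, 3, 5, 8]
    did_convert = [False, False, True, False, True, True]
    print(best_threshold(seats_added, did_convert, [1, 3, 5], min_support=1))
    print(best_threshold(seats_added, did_convert, [1, 3, 5], min_support=3))
```

With `min_support=1` the search favors the narrowest threshold, while raising `min_support` pushes it toward broader Signals that cover more accounts at a somewhat lower conversion rate.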

Signal Configuration and Autotune

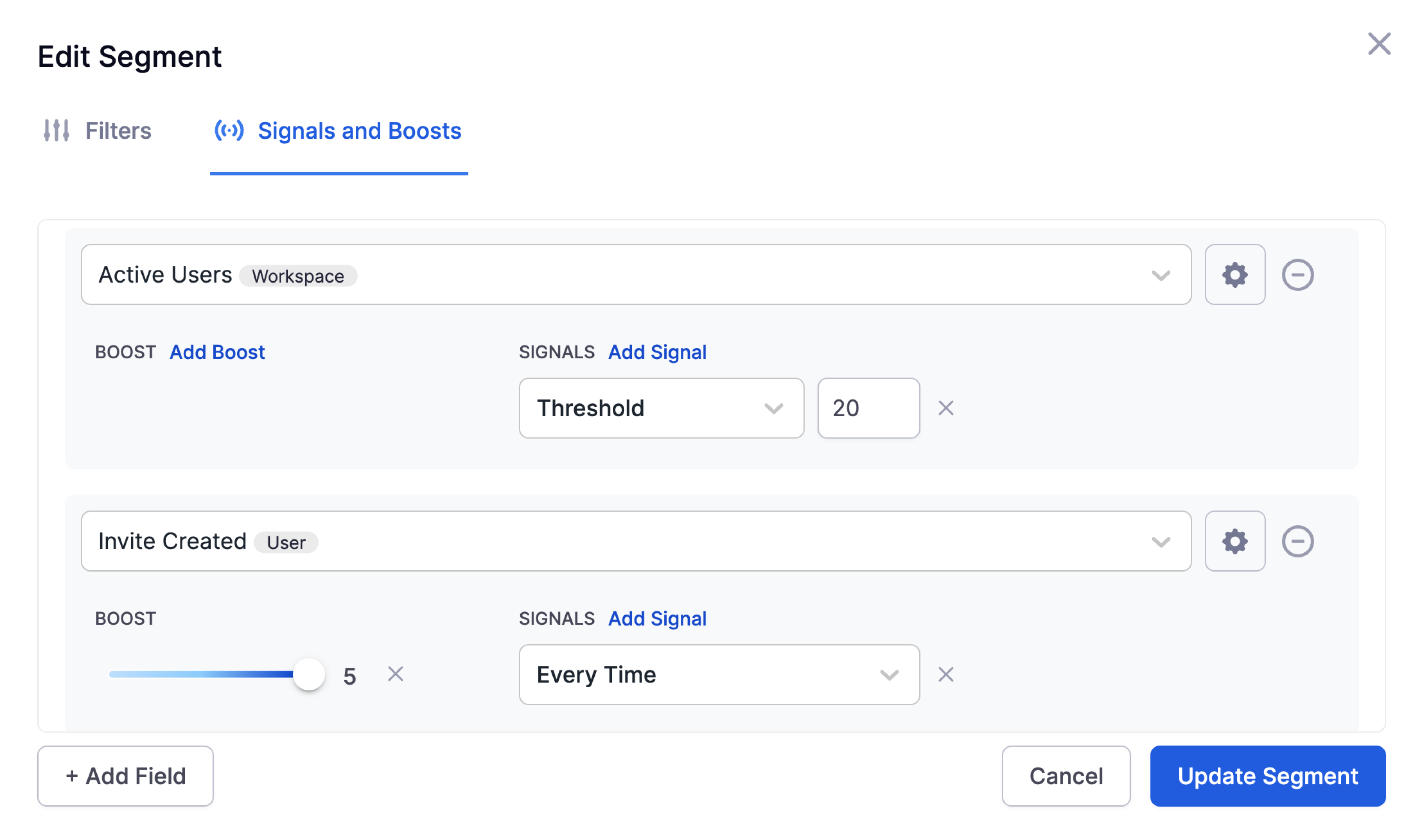

Step 3: Fine tune your GTM plays

These outputs can then be used to optimize how different Segments (typically plan tiers, e.g., free, paid, pro, enterprise) are configured and how much each Signal is boosted when calculating Signal Strength. This is the foundation for defining when and how humans are brought into the customer journey. With Autotune, PLG companies can now use ML to draw that line and operationalize their GTM plays by building Views that target the Signals that matter.
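One simple way to picture boosting is a weighted sum: each Signal an account shows contributes its boost to a Signal Strength score, and each Segment has its own threshold for when a human should step in. The boost values, Segment names, thresholds, and the additive scoring rule below are all assumptions made for illustration; the source does not specify how Endgame combines boosts.

```python
from typing import Dict, Set

# Hypothetical boosts per Signal, e.g. informed by Autotune's conversion rates.
BOOSTS: Dict[str, float] = {
    "invited_teammate": 3.0,
    "used_api": 1.5,
    "hit_usage_limit": 2.0,
}

# Hypothetical per-Segment thresholds for handing an account to a rep.
HANDOFF_THRESHOLDS: Dict[str, float] = {
    "free": 5.0,
    "pro": 3.0,
}


def signal_strength(signals: Set[str], boosts: Dict[str, float] = BOOSTS) -> float:
    """Sum the boosts of the Signals an account shows; unknown Signals add nothing."""
    return sum(boosts.get(s, 0.0) for s in signals)


def should_hand_off(segment: str, signals: Set[str]) -> bool:
    """True when the account's Signal Strength meets its Segment's threshold."""
    threshold = HANDOFF_THRESHOLDS.get(segment)
    if threshold is None:
        return False  # no human play configured for this Segment
    return signal_strength(signals) >= threshold
```

Giving each Segment its own threshold is what lets the same Signals trigger a rep sooner for one plan tier than for another.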

Step 4: Validate and iterate

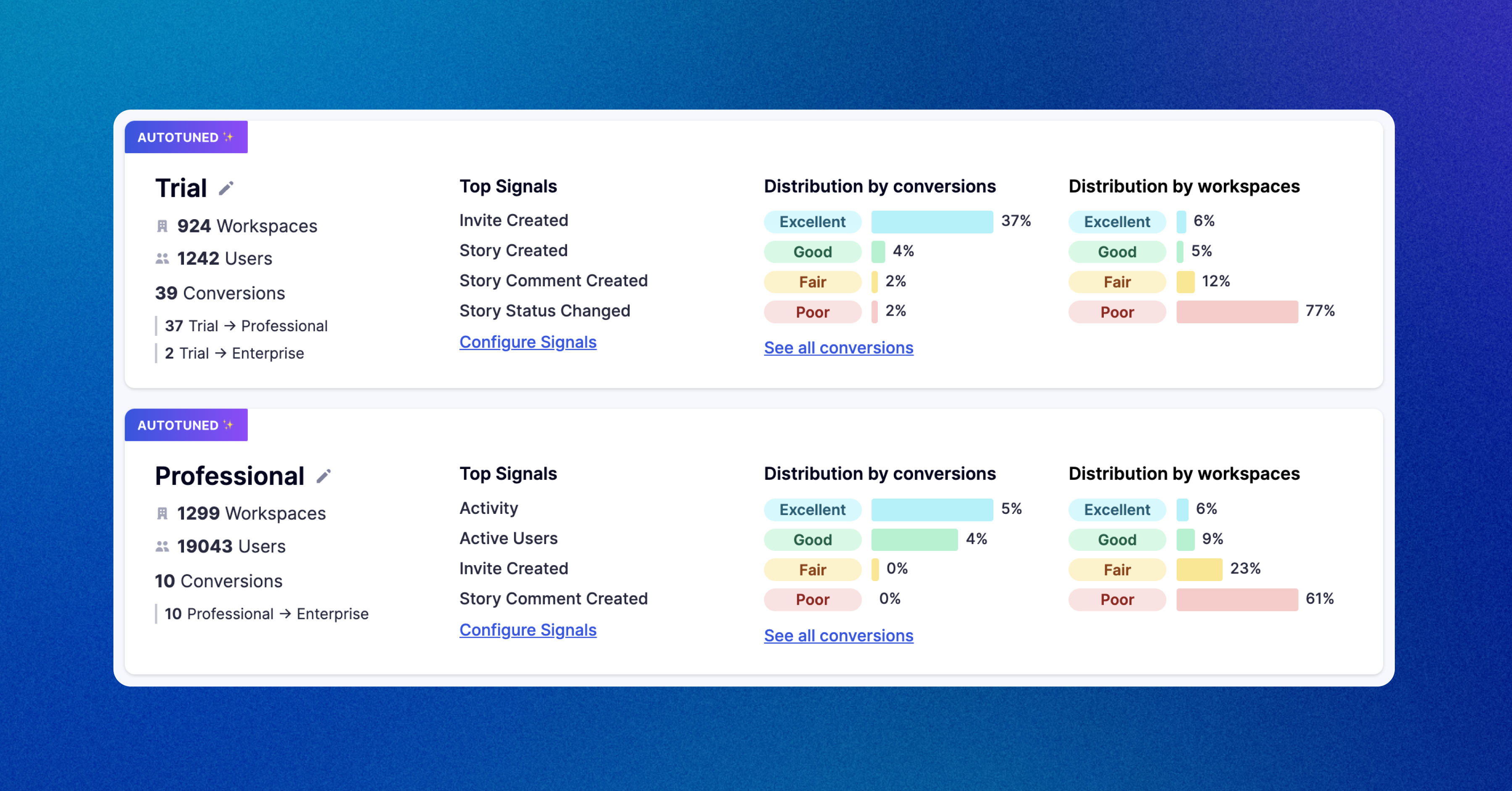

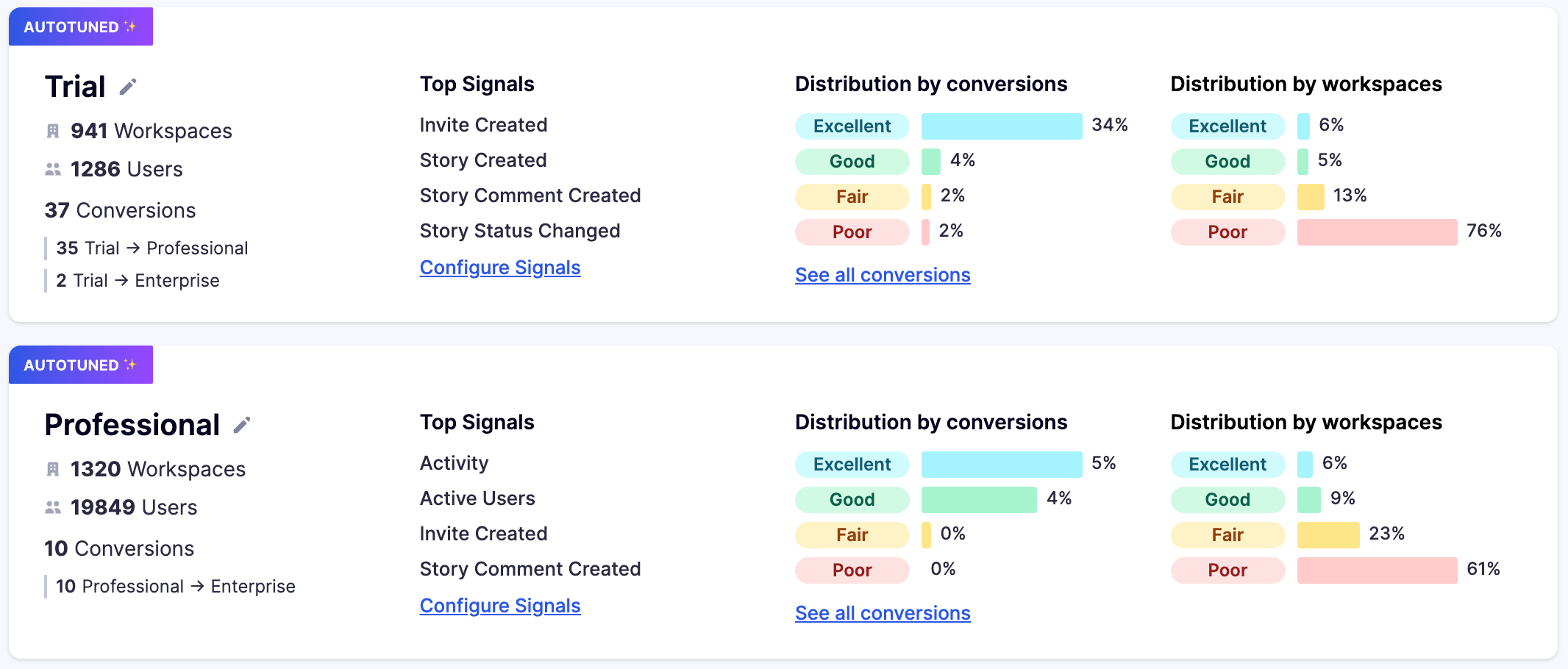

Once everything is configured, admins can check the Segments page to see how conversions look each day, adjust Signals as needed, and rerun Autotune as the market or their pricing and packaging shift.

How successful customers use Autotune

Customer journey optimization

GTM teams use Autotune to optimize the customer journey by tailoring Signals and Signal Strength to its recommendations. This streamlines the conversion path between product tiers and helps teams understand what their best users are doing and why they convert at higher rates.

ML-driven PQA alerting

Sales teams use Autotune to power proactive notifications for high-leverage accounts, or product-qualified accounts (PQAs). Alerts can be surfaced each day directly in Slack or in the Feed, based on the Signals the Autotune model recommends. Instead of relying on black-box PQLs as before, reps get a real-time stream of high-leverage Signals in their territory, along with the context they need to take action.